Data Orchestration with DataChannel: Benefits, Features & Use Cases

For a long time (like forever), data teams have wrestled with multiple tools, rickety scripts, and assorted patchwork just to keep data flowing between myriad business systems and databases. The daily hustle is to keep the system going for one more day. No one wants to consider big changes lest they upset the proverbial data engineering apple cart. This reluctance to change has become a significant impediment for data teams, taking a toll on their efficiency and agility and costing real dollars.

DataChannel Orchestration emerges as a promising solution precisely tailored to address the persistent challenges faced by data teams. This innovative approach seeks to unravel the complexities entwined with managing disparate tools, convoluted scripts, and the patchwork nature of existing data management systems.

Components of Data Orchestration

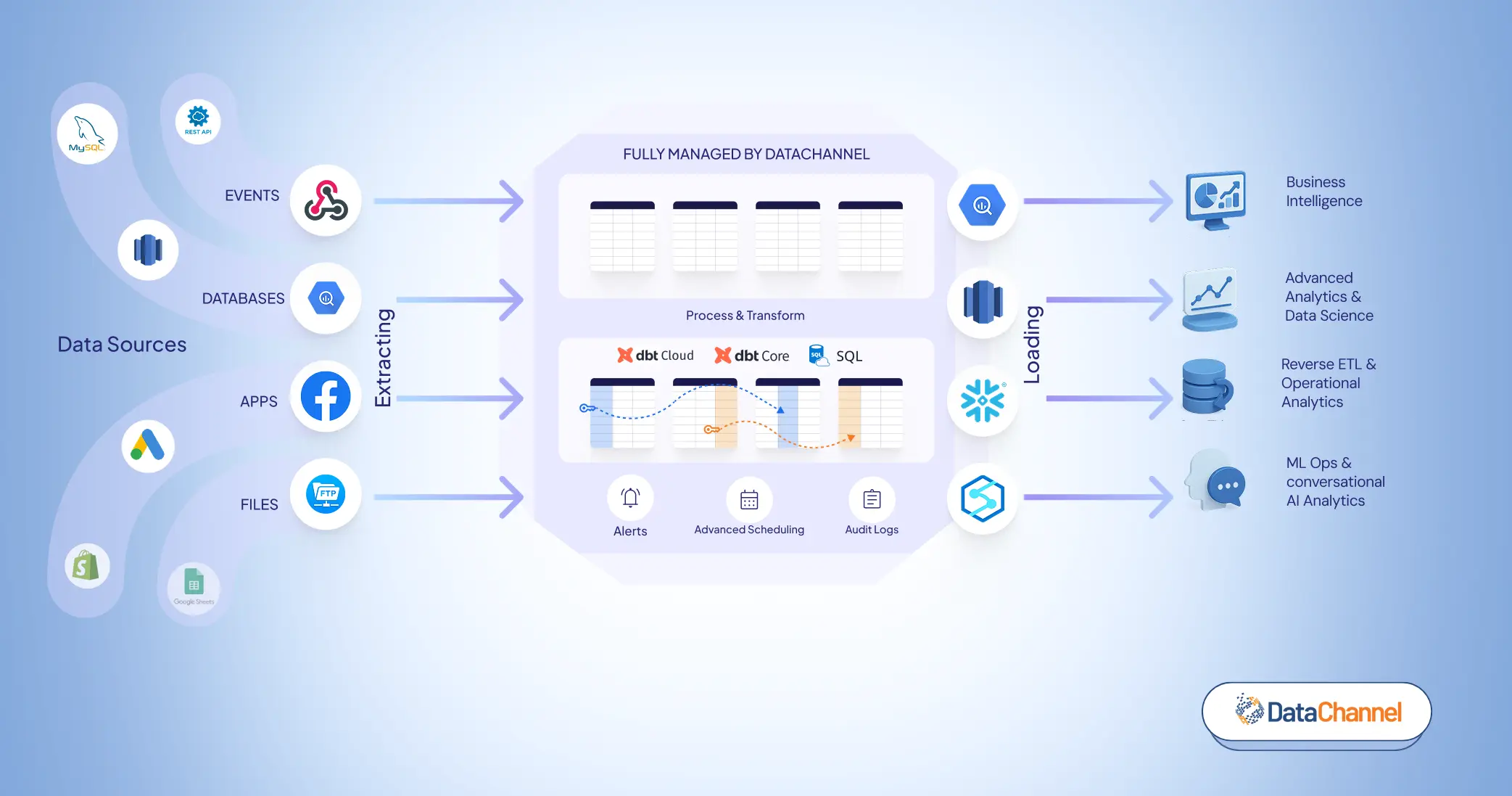

To understand data orchestration, we first need to understand a business's data life cycle: from ingesting data from multiple source systems, to making it analytics-ready for BI tools, to feeding it into ML/AI models for advanced behavioral predictions.

“The cloud orchestration market size is projected to reach $105 million by 2030, registering a CAGR of 21.4%. Growth in demand for optimum resource utilization, need for self-servicing provisioning, and surge in demand for low-cost process setup and automation primarily drive the growth of the global cloud orchestration industry.”

Data orchestration replaces repetitive manual intervention with self-serve data pipelines. For ELT workflows, it typically consists of the following steps.

- Data Extraction. Data is collected from raw sources. This might be done directly, by querying your OLTP databases, or by pulling data from third-party APIs. Organizing incoming data from a number of sources is a painful task, and businesses rely on ELT tools to take care of it. Once the data is organized, these tools load it into a data warehouse, which brings us to the next step.

- Data Loading. Data is stored in storage systems such as databases, data warehouses, or data lakes. Loading is followed by transformation, which gives the data more structure and a consistent format.

- Data Transformation. Data is cleaned and aggregated. Outliers are removed and business interpretations are added, for example by deduplicating customer data and making it more coherent after aggregating it across a range of touchpoints and applications. The next step is activating this transformed data back into SaaS applications.

- Data Activation. A crucial part of data orchestration is making data available for activation, i.e., Reverse ETL. Cleaned, consolidated data is sent to downstream tools for immediate use (e.g., building a new audience segment for targeted advertising based on specific age and geography for an upcoming sales event).
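The four steps above can be sketched as a tiny in-memory workflow. This is only an illustration under stated assumptions: the function names, the `warehouse` dictionary, and the sample rows are hypothetical stand-ins and are not part of DataChannel or any real connector.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Customer:
    email: str
    age: int
    country: str


def extract() -> list[dict]:
    # Hypothetical stand-in for querying an OLTP database or a third-party API.
    return [
        {"email": "Ana@example.com", "age": 31, "country": "US"},
        {"email": "ana@example.com ", "age": 31, "country": "US"},  # duplicate
        {"email": "raj@example.com", "age": 45, "country": "IN"},
    ]


def load(rows: list[dict], warehouse: dict) -> None:
    # Raw rows are stored untouched: in ELT, loading comes before transformation.
    warehouse["raw_customers"] = list(rows)


def transform(warehouse: dict) -> None:
    # Clean the data and deduplicate customers on a normalized email address.
    unique: dict[str, Customer] = {}
    for row in warehouse["raw_customers"]:
        email = row["email"].strip().lower()
        if email not in unique:
            unique[email] = Customer(email, row["age"], row["country"])
    warehouse["customers"] = list(unique.values())


def activate(warehouse: dict, min_age: int, max_age: int, country: str) -> list[str]:
    # Reverse ETL stand-in: build an audience segment for a downstream tool.
    return [
        c.email
        for c in warehouse["customers"]
        if min_age <= c.age <= max_age and c.country == country
    ]


def run_workflow() -> list[str]:
    warehouse: dict = {}
    load(extract(), warehouse)
    transform(warehouse)
    return activate(warehouse, min_age=25, max_age=40, country="US")
```

Calling `run_workflow()` runs the stages strictly in order, so the audience segment is always built from deduplicated data rather than from the raw rows.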

Benefits of Data Orchestration

Airflow, a pioneering data orchestration tool, has faced challenges around maintenance, uptime, and complex workflow management. Second-generation tools like Dagster and Prefect aim to address these issues and improve on Airflow's capabilities. But these tools sit on the technical side of orchestration and require a good command of programming languages, which poses a barrier for non-technical teams. Businesses today need a tool that lets them orchestrate their data workflows with minimal coding. That’s where DataChannel comes into the picture: a low-code tool that enables businesses of all sizes to gain control over their data. Some of the advantages DataChannel offers for data orchestration are:

- Scalability. Data orchestration is a cost-effective way of automating synchronization across data silos, enabling organizations to scale data use.

- Monitoring. Automated data pipelines with success/failure alerts are easier to monitor, and issues can be identified and fixed faster than with scripts and disparate monitoring standards.

- Real-time information. Automated data orchestration allows for real-time data analysis or storage since data can be extracted and processed at the moment it’s created.

- Faster insights. Data orchestration streamlines data workflows so you can get business intelligence and actionable insights fast.

Note: This is an early-access feature being released to a limited set of users. To request access, please fill out the form here.

Use Cases

Interdependency between Tools

Challenge: In a typical data team, members employ different tools for various stages of the workflow (e.g., ELT, transformation using dbt, data activation, and scheduling with tools like Airflow).

Solution: Data orchestration with DataChannel enables seamless scheduling and builds interdependencies between these tools within a single platform. This ensures that downstream data is always up-to-date, and failures trigger immediate notifications. This centralized control prevents the progression of the workflow with incorrect or stale data, fostering data reliability.

Low Code Approach

Challenge: Tools like Airflow require proficiency in coding languages for DAG (Directed Acyclic Graph) modifications, which can be a barrier for non-technical users.

Solution: DataChannel adopts a low-code approach with a user-friendly drag-and-drop interface. This allows users to make changes to the workflow without the need for expertise in coding languages. Unlike the code-first approach of Airflow, DataChannel enhances accessibility and reduces development time, making it more user-friendly for a broader audience.

Scheduled Job Runs

Challenge: Manually managing buffer times between job runs can be inefficient and prone to errors.

Solution: Data orchestration ensures that each step in the workflow completes before the next one begins, eliminating the need for buffer times. This level of control enhances predictability, reduces trial-and-error, and provides a more efficient and dependable workflow.
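As a rough sketch of the idea, dependency-ordered execution can be expressed with Python's standard-library `graphlib.TopologicalSorter`. The task names and the `run_in_order` helper below are hypothetical and only illustrate the technique; they do not describe how DataChannel implements it.

```python
from graphlib import TopologicalSorter
from typing import Callable, Optional


def run_in_order(
    dependencies: dict[str, set[str]],
    tasks: dict[str, Callable[[], None]],
) -> tuple[list[str], Optional[str]]:
    """Run each task only after every task it depends on has succeeded.

    Returns the names of the tasks that completed and an error message
    (or None). On the first failure the run stops, so no downstream step
    ever works on incorrect or stale data.
    """
    completed: list[str] = []
    for name in TopologicalSorter(dependencies).static_order():
        try:
            tasks[name]()
        except Exception as exc:
            return completed, f"{name} failed: {exc}"
        completed.append(name)
    return completed, None


# Each key lists the steps that must finish before it may start.
DEPENDENCIES = {
    "transform": {"extract"},
    "activate": {"transform"},
    "dashboard": {"transform"},
}
```

Because the order comes from the dependency graph rather than from clock times, no buffer is needed between steps: a step starts as soon as its predecessors have finished, however long they took.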

Eliminating Data Silos

Challenge: Without proper orchestration, workflows can become fragmented, leading to inefficiencies and delays.

Solution: Data orchestration results in a more streamlined execution of workflows. Because all the work gets done within a single tool, the entire process becomes more efficient, reducing the likelihood of bottlenecks and enhancing overall productivity.

Ensuring Data Freshness

Challenge: Ensuring data quality throughout the workflow is vital, and any compromise in data freshness at any stage can have cascading effects on subsequent steps.

Solution: Data orchestration minimizes the chances of compromising data quality by orchestrating each step and ensuring that data is consistently fresh. With dependencies in place, the workflow becomes a cohesive unit, reducing the risk of poor data quality affecting downstream steps.

Features of Data Orchestration with DataChannel

This is what a typical orchestra looks like within DataChannel. All the workflows are integrated and interconnected so that every step is executed only after the preceding step has succeeded and is ready to deliver fresh data to the next one.

“Airflow is dead. It’s almost impossible to learn and even if you’re familiar with it, the hours you spend maintaining it are a waste of life.” -Hugo Lu

Business teams today need a tool that has a gentle learning curve, is not code-first, and makes extracting insights (and life in general) easier for teams outside data engineering. DataChannel, with its intuitive drag-and-drop interface, becomes an indispensable asset for businesses of all sizes, catering to teams with varying levels of technical proficiency.

Building an orchestra is as easy as the image suggests. You start with your data sources, extracting data via your already configured pipelines, and then set up the relevant data transformations using either SQL or dbt. Once your data is structured and normalized, you move on to data activation (via reverse ETL) to push it into the desired business applications. Finally, you connect a BI tool (e.g., Tableau) to build dashboards and monitor your performance over a set period using relevant marketing or non-marketing metrics.

The different features that Data Orchestration with DataChannel offers are explained in detail below:

Note: To learn more about the features of Data Orchestration, see our product documentation.

- Decision Nodes: Decision nodes add conditions to your workflows so that you avoid unnecessary pipeline or sync runs. Say you want a notification (via Slack or email) for your Amazon Seller Central pipeline only when it retrieves more than 100 rows. A decision node lets you set that condition, so you are notified only when actual work gets done.

- Pipeline Freshness: Pipeline freshness sets a limit on how old data may be when it moves between steps. DataChannel's standard requirement is that data must be no more than 2 hours old between extraction and transformation.

- Number of Tries: If a pipeline run fails for an unforeseen reason, DataChannel lets you define how many times the pipeline is tried before the run is reported as an error. (The default value in DataChannel is 1 and can be increased to a maximum of 3.)

- Scheduling Interdependent Tasks: Interdependent tasks can be scheduled easily with Data Orchestration so that every step in your workflow works on the most recent data. This helps reduce orchestration failures, downtime, and errors, and improves productivity.
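To make the first three features concrete, here is a minimal sketch of the logic behind them. The thresholds (more than 100 rows, 2 hours, at most 3 tries) come from the descriptions above; every function and constant name is a hypothetical illustration, not DataChannel's actual API, where these options are configured through the interface rather than in code.

```python
from datetime import datetime, timedelta, timezone
from typing import Callable, Optional

ROW_THRESHOLD = 100                     # decision node: notify above this count
FRESHNESS_WINDOW = timedelta(hours=2)   # maximum age of extracted data
MAX_TRIES_LIMIT = 3                     # upper bound on the number of tries


def should_notify(row_count: int) -> bool:
    # Decision node: notify only when the pipeline retrieved more than 100 rows.
    return row_count > ROW_THRESHOLD


def is_fresh(extracted_at: datetime, now: Optional[datetime] = None) -> bool:
    # Freshness check: data must be no more than 2 hours old at transformation.
    now = now or datetime.now(timezone.utc)
    return now - extracted_at <= FRESHNESS_WINDOW


def run_with_tries(pipeline: Callable[[], int], tries: int = 1) -> int:
    # Try the pipeline up to `tries` times (clamped to 1..3) before reporting
    # the run as failed. Returns whatever the pipeline returns on success.
    tries = max(1, min(tries, MAX_TRIES_LIMIT))
    last_error: Optional[Exception] = None
    for _ in range(tries):
        try:
            return pipeline()
        except Exception as exc:
            last_error = exc
    raise RuntimeError(f"pipeline failed after {tries} tries") from last_error
```

A workflow could combine these by calling `run_with_tries` for the extraction step, checking `is_fresh` before transformation, and passing the returned row count to `should_notify` before sending a Slack or email alert.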

Scalable, Reliable, and Simplified Data Orchestration

DataChannel Orchestration holds the potential to significantly reduce the technical debt associated with outdated data management practices. By providing a structured and standardized approach, it empowers organizations to retire legacy systems and embrace modern, scalable, and secure solutions that align with the demands of today's data landscape.

Try DataChannel Free for 14 days