Top 7 Data Orchestration Tools (For 2026)

According to a Gartner survey, 87 percent of organizations have low business intelligence (BI) and analytics maturity. Organizations with low BI maturity often lack a unified data and analytics strategy with a clear vision. Each business unit runs its own data or analytics projects, which eventually results in data silos and inconsistent processes.

Data teams also struggle with a patchwork of tools, brittle scripts, and quick fixes just to move data between numerous business systems and databases. Much of their daily effort goes into keeping these pipelines running for one more day. Sticking with this patchwork rather than adopting new approaches has become a real obstacle: it slows teams down, limits their ability to adapt, and adds unnecessary costs.

Data orchestration tools help you streamline your workflows, eliminate data silos, and build a more efficient infrastructure. In this blog, we’ll discuss the top 7 data orchestration tools. We compiled this list after evaluating multiple tools against several criteria, so that you can choose the right orchestration tool for your business.

Components of Data Orchestration

Data Orchestration can involve the following key components:

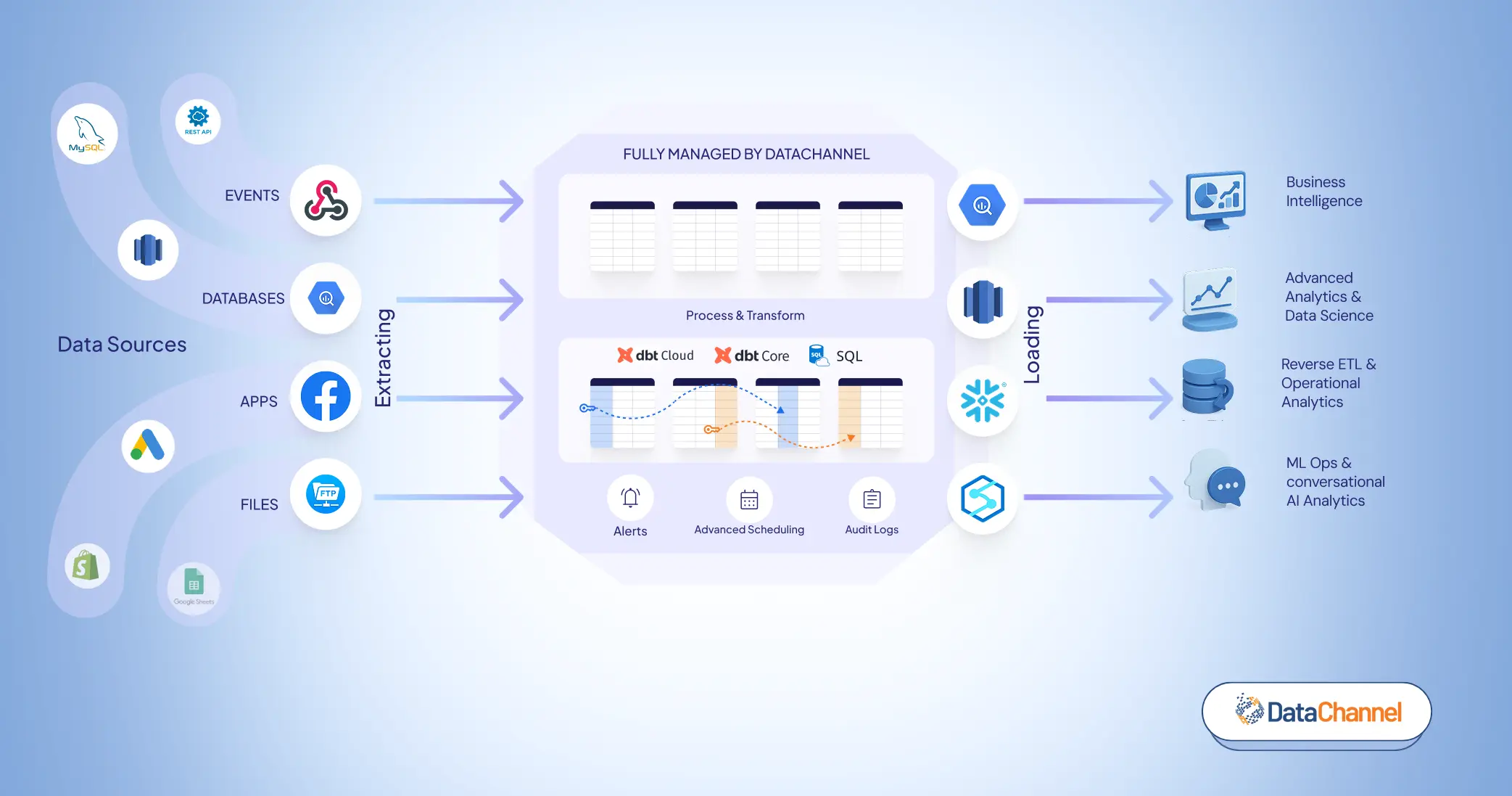

- Data Extraction: Raw data is collected from OLTP databases or third-party APIs. ELT tools organize and load it into a data warehouse.

- Data Loading: Data is stored in databases, warehouses, or lakes, preparing it for transformation.

- Data Transformation: Data is cleaned, aggregated, and standardized. Duplicates and outliers are removed, ensuring consistency across sources.

- Data Activation: Processed data is sent to downstream applications (Reverse ETL) for immediate use, such as targeted marketing campaigns.
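The four components above can be sketched as a tiny pipeline in plain Python. This is only an illustration under stated assumptions: the sample rows, the in-memory `warehouse` dict, and the function names are all hypothetical and do not correspond to any specific tool's API.

```python
def extract():
    """Stand-in for pulling raw rows from an OLTP database or an API."""
    return [
        {"order_id": 1, "customer": "a@example.com", "amount": "20.0"},
        {"order_id": 1, "customer": "a@example.com", "amount": "20.0"},  # duplicate row
        {"order_id": 2, "customer": "b@example.com", "amount": "35.5"},
    ]


def load(rows, warehouse):
    """Store the raw rows untouched in a 'warehouse' (here, a plain dict)."""
    warehouse["raw_orders"] = list(rows)


def transform(warehouse):
    """Deduplicate by order_id and cast amounts from text to float."""
    cleaned = {}
    for row in warehouse["raw_orders"]:
        cleaned[row["order_id"]] = {**row, "amount": float(row["amount"])}
    warehouse["orders"] = list(cleaned.values())


def activate(warehouse, min_amount):
    """Pick the customers to push to a downstream tool (Reverse ETL)."""
    return [r["customer"] for r in warehouse["orders"] if r["amount"] >= min_amount]


def run_pipeline():
    """Run the stages in order: extract, load, transform, activate."""
    warehouse = {}
    load(extract(), warehouse)
    transform(warehouse)
    audience = activate(warehouse, min_amount=30.0)
    return warehouse, audience


warehouse, audience = run_pipeline()
```

An orchestrator's job is essentially what `run_pipeline` does here: make sure each stage runs in the right order and only on the output of the stage before it.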

Top 7 Data Orchestration Tools

DataChannel

Airflow

Astronomer

Dagster

Prefect

Metaflow

Azure Data Factory (ADF)

DataChannel

Data orchestration with DataChannel offers a practical way to tackle the ongoing challenges data teams face. Instead of dealing with scattered tools, complex scripts, and quick-fix systems, it helps bring data workflows together in one place and makes them easier to manage.

Data orchestration with DataChannel includes the following features:

- Decision Nodes: Decision nodes add conditions to your workflows so that you avoid unnecessary pipeline or sync runs. Let’s say you want a notification (via Slack or email) for your Amazon Seller Central pipelines only when a run retrieves more than 100 rows. A decision node lets you set that condition, so you are notified only when meaningful work has been done.

- Pipeline Freshness: Pipeline freshness lets you enforce how recent your data must be. Our standard requirement is that data must not be more than 2 hours old between extraction and transformation.

- Number of Tries: If a pipeline run fails for an unforeseen reason, DataChannel lets you define how many times the pipeline is tried before the run is reported as an error. (The default value in DataChannel is 1; it can be increased to a maximum of 3.)

- Scheduling Interdependent Tasks: Interdependent tasks can be scheduled easily so that every step in your workflow works on the most recent data. This reduces orchestration failures, downtime, and errors, and improves productivity.
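To make the first three features concrete, here is a minimal sketch of the logic they describe, written in plain Python. It is not DataChannel's API: `run_with_tries`, `is_fresh`, `should_notify`, and the sample task are hypothetical names, and only the numbers (100 rows, 2 hours, a maximum of 3 tries) come from the descriptions above.

```python
from datetime import datetime, timedelta, timezone

MAX_TRIES = 3                          # upper bound on tries mentioned above
FRESHNESS_LIMIT = timedelta(hours=2)   # maximum age between extraction and transformation
ROW_THRESHOLD = 100                    # notify only above this row count


def run_with_tries(task, tries=1):
    """Call task() up to `tries` times and re-raise the last error if all fail."""
    if not 1 <= tries <= MAX_TRIES:
        raise ValueError(f"tries must be between 1 and {MAX_TRIES}")
    last_error = None
    for _ in range(tries):
        try:
            return task()
        except Exception as exc:  # broad on purpose: any failure counts as a failed try
            last_error = exc
    raise last_error


def is_fresh(extracted_at, transformed_at):
    """Freshness check: the data is at most 2 hours old at transformation time."""
    return transformed_at - extracted_at <= FRESHNESS_LIMIT


def should_notify(row_count):
    """Decision-node condition: notify only when more than 100 rows arrived."""
    return row_count > ROW_THRESHOLD


# Hypothetical extraction task that fails once, then returns a row count.
calls = {"n": 0}


def flaky_extract():
    calls["n"] += 1
    if calls["n"] == 1:
        raise RuntimeError("temporary failure")
    return 150


rows = run_with_tries(flaky_extract, tries=2)
extracted_at = datetime(2026, 1, 1, 9, 0, tzinfo=timezone.utc)
transformed_at = datetime(2026, 1, 1, 10, 30, tzinfo=timezone.utc)
notify = should_notify(rows) and is_fresh(extracted_at, transformed_at)
```

In this run the first try fails, the second succeeds with 150 rows, and the data is 90 minutes old, so both conditions pass and a notification would be sent.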

Some of the benefits that DataChannel offers when it comes to data orchestration:

- Scalability: Data orchestration is a cost-effective way of automating synchronization across data silos, enabling organizations to scale data use.

- Monitoring: Automated data pipelines with success and failure alerts are easy to monitor, and issues can be identified and fixed faster than with scripts and disparate monitoring standards.

- Real-time information: Automated data orchestration allows for real-time data analysis or storage since data can be extracted and processed at the moment it’s created.

- Faster insights: Data orchestration streamlines data workflows so you can get business intelligence and actionable insights fast.

G2 Reviews: 4.8 out of 5

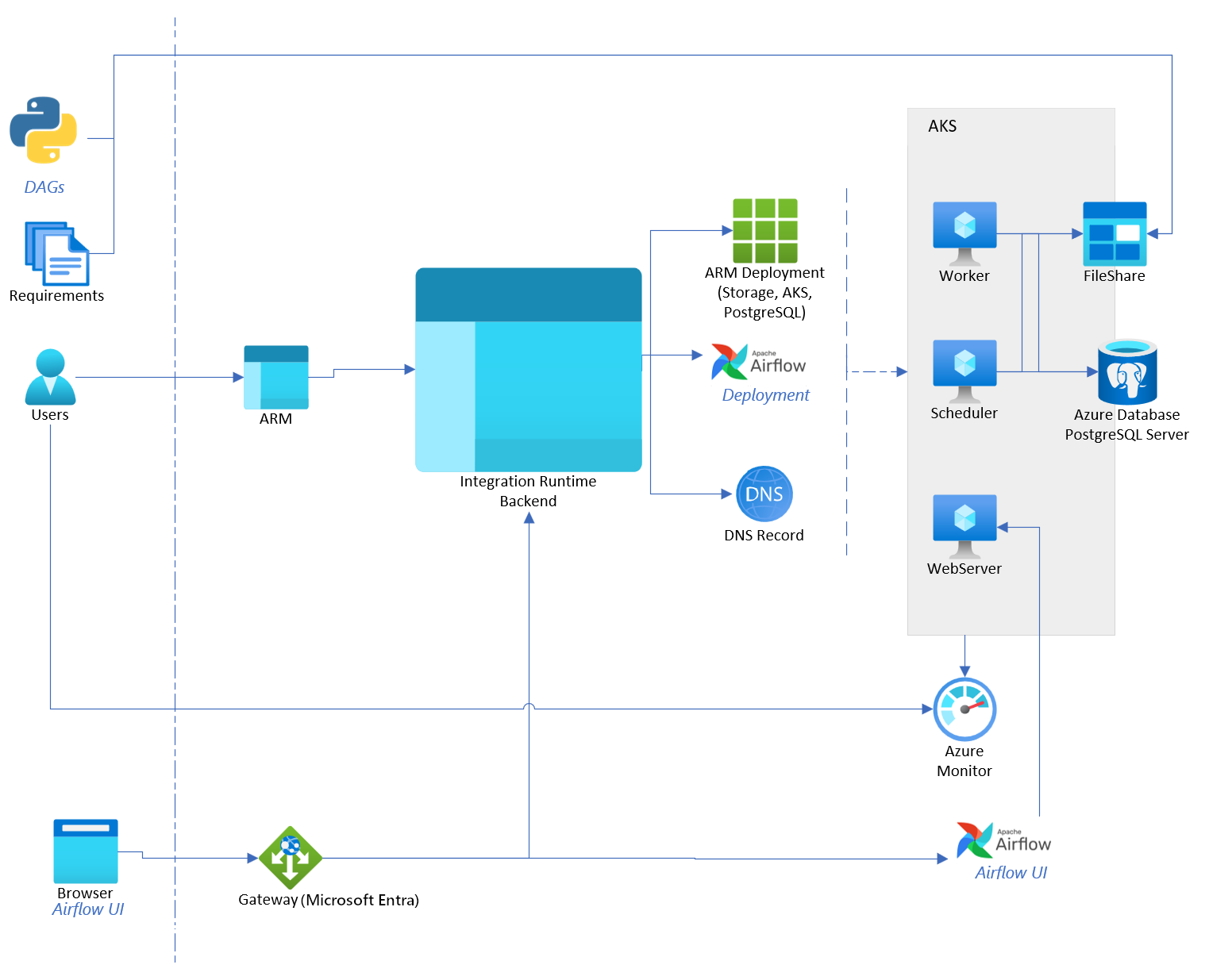

Airflow

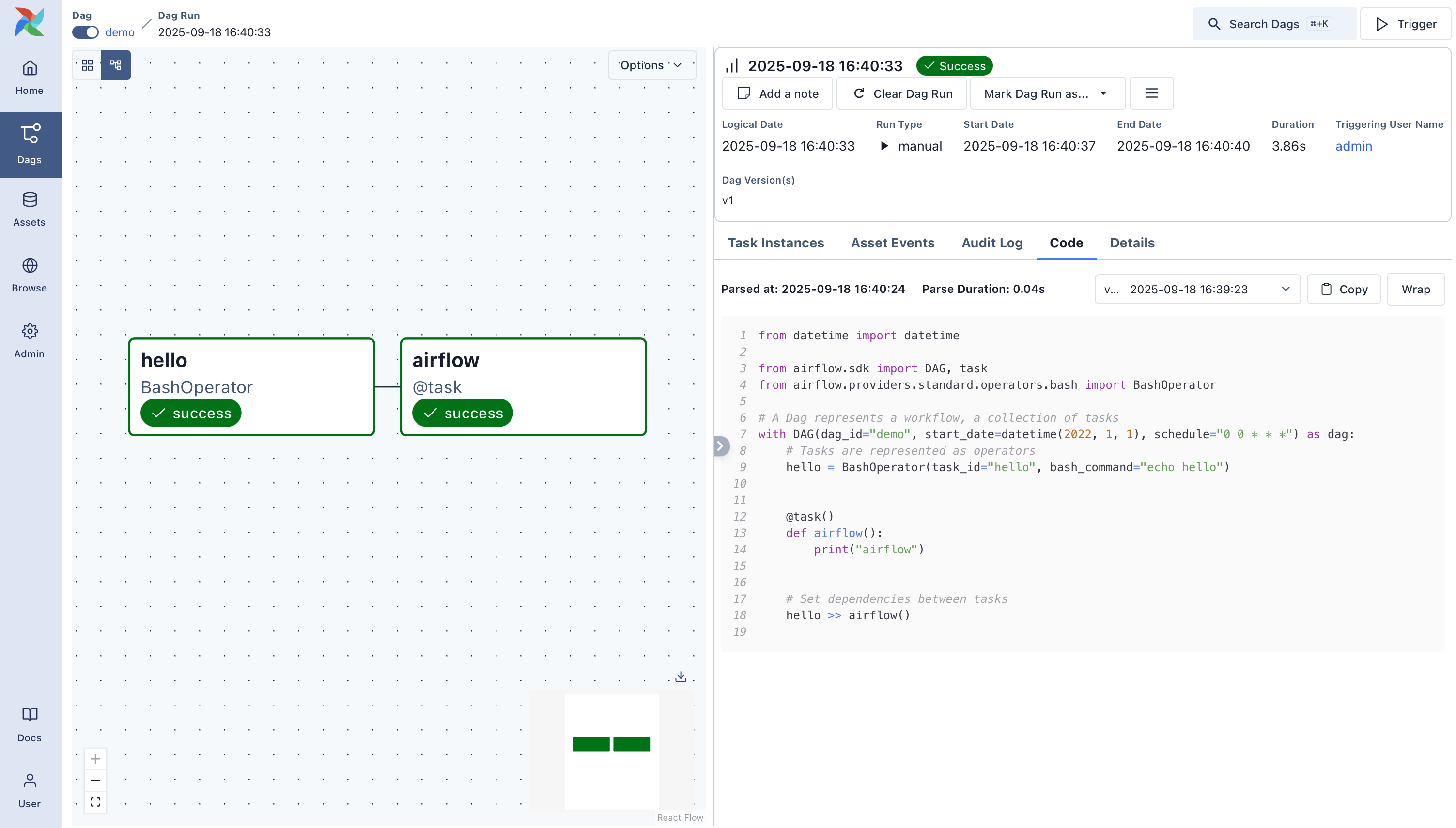

Apache Airflow is an open-source platform used to build, schedule, and monitor data workflows. It allows teams to define pipelines as Python code and orchestrate tasks across different systems through DAGs (Directed Acyclic Graphs). Airflow is widely used for managing data pipelines, batch processing jobs, and analytics workflows.
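The DAG idea itself can be illustrated without Airflow installed. The sketch below uses Python's standard-library `graphlib` (Python 3.9+) rather than Airflow's own classes; the task names and the `run_dag` helper are hypothetical, and a real Airflow DAG would be declared with Airflow's operators instead.

```python
from graphlib import TopologicalSorter

# Each key is a task; its value is the set of tasks it depends on.
dag = {
    "extract": set(),
    "load": {"extract"},
    "transform": {"load"},
    "report": {"transform"},
    "notify": {"transform"},
}


def run_dag(dag, tasks):
    """Run every task after all of its dependencies and return the order used."""
    order = []
    for name in TopologicalSorter(dag).static_order():
        tasks[name]()          # execute the task body
        order.append(name)
    return order


# Placeholder task bodies; real tasks would move or transform data.
tasks = {name: (lambda: None) for name in dag}
order = run_dag(dag, tasks)
```

Because the graph is acyclic, a valid execution order always exists. If a cycle were introduced (for example, `extract` depending on `report`), `TopologicalSorter` would raise a `CycleError` instead of running anything.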

Pros

- Pipelines are defined in Python, enabling dynamic workflow creation and easy version control.

- Large ecosystem of operators, hooks, and integrations that connect with databases, cloud services, and other data tools.

- Built-in web UI for monitoring DAG runs, task logs, retries, and workflow dependencies.

Cons

- Initial setup and infrastructure management can be complex in production environments.

- Debugging complex DAGs and managing dependencies across tasks can become difficult as pipelines grow.

G2 Reviews: 4.5 out of 5

Astronomer

Astronomer (Astro) is a managed platform built around Apache Airflow that helps data teams develop, run, and monitor pipelines at scale. It provides a fully managed environment for Airflow, taking care of infrastructure, deployment, scaling, and upgrades so teams can focus on building reliable data workflows and delivering insights.

Pros

- Managed Airflow environment that handles infrastructure, scaling, and upgrades automatically.

- Docker-based development allows teams to test changes, dependencies, and version upgrades locally before pushing them to production.

- Built-in observability and lineage capabilities help teams track pipeline health, dependencies, and data flow.

Cons

- Still relies heavily on Airflow expertise, which can make adoption challenging for teams new to orchestration.

- Costs can scale up for larger deployments compared to self-managed Airflow.

- Advanced monitoring and alerting may require additional configuration or integrations depending on the team’s setup.

G2 Reviews: 4.6 out of 5

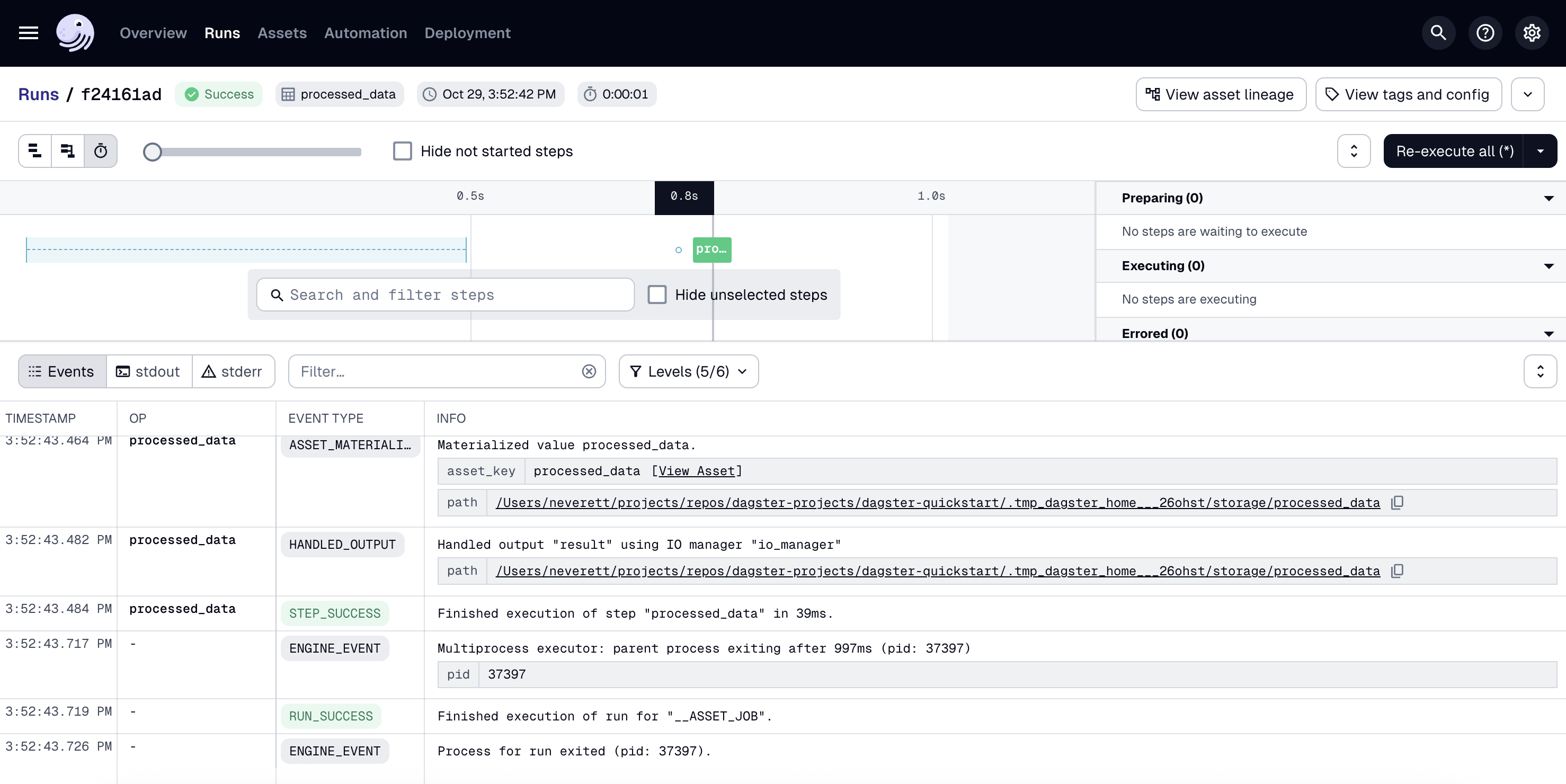

Dagster

Dagster is a modern data orchestration platform designed for building, running, and monitoring data pipelines with a strong focus on data assets. Instead of thinking only in terms of tasks, Dagster encourages teams to define data assets and the relationships between them. Its framework uses Software-Defined Assets (SDAs) to give teams clearer visibility into how data is produced, transformed, and consumed across pipelines.
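As a rough illustration of the asset-based way of thinking, the sketch below registers functions as assets and treats each function's parameter names as its upstream assets. It is plain Python, not Dagster's library: the `asset` decorator, the `ASSETS` registry, and `materialize` are hypothetical stand-ins written for this example.

```python
import inspect

ASSETS = {}  # registry mapping asset name to the function that produces it


def asset(fn):
    """Register fn as an asset; its parameter names are its upstream assets."""
    ASSETS[fn.__name__] = fn
    return fn


@asset
def raw_orders():
    return [{"id": 1, "amount": 20.0}, {"id": 2, "amount": 35.5}]


@asset
def order_totals(raw_orders):
    return sum(row["amount"] for row in raw_orders)


@asset
def revenue_report(order_totals):
    return f"Total revenue: {order_totals:.2f}"


def materialize(name, cache=None):
    """Build an asset, first building whatever it depends on (each only once)."""
    cache = {} if cache is None else cache
    if name not in cache:
        fn = ASSETS[name]
        upstream = inspect.signature(fn).parameters
        inputs = {dep: materialize(dep, cache) for dep in upstream}
        cache[name] = fn(**inputs)
    return cache[name]


report = materialize("revenue_report")
```

The point of the design is that you ask for the data you want (`revenue_report`) and the dependency graph determines which upstream work has to run, rather than listing tasks in order yourself.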

Pros

- Reusable and modular components help reduce repetitive coding and make pipelines easier to maintain.

- Asset-based architecture (SDAs) provides better visibility into data dependencies, scheduling, and pipeline execution.

- Strong developer experience with built-in testing, data quality checks, and CI/CD-friendly workflows.

Cons

- Pricing for the managed Dagster Cloud may be expensive for smaller teams.

- Asset-based concepts may require some adjustment for teams used to traditional task-based orchestrators.

G2 Reviews: 4.7 out of 5

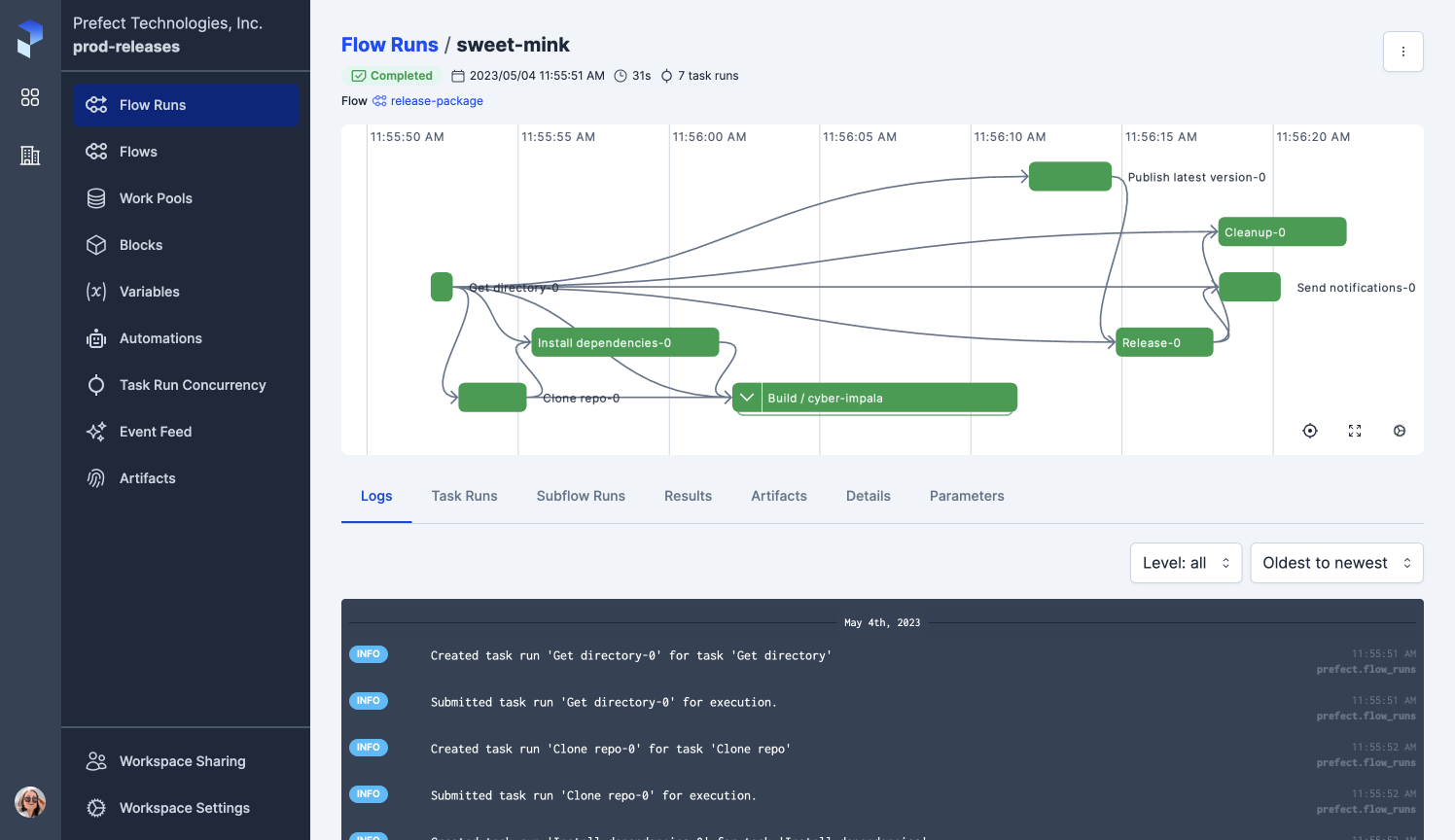

Prefect

Prefect is a modern workflow orchestration platform designed to build, run, and monitor data pipelines with minimal overhead. It offers both CLI and UI interfaces and focuses on a developer-friendly experience by allowing workflows to be written directly in Python without rigid DAG constraints.

Pros

- Python-native framework that allows developers to write workflows using standard Python without complex boilerplate.

- Flexible orchestration model supporting time-based and event-driven scheduling.

- Built-in observability tools for monitoring workflow runs, logs, retries, and task states.

Cons

- Frequent feature updates and version changes can sometimes require adjustments in existing workflows.

- Some advanced capabilities are tied to Prefect Cloud, which may add cost depending on scale.

G2 Reviews: 4.5 out of 5

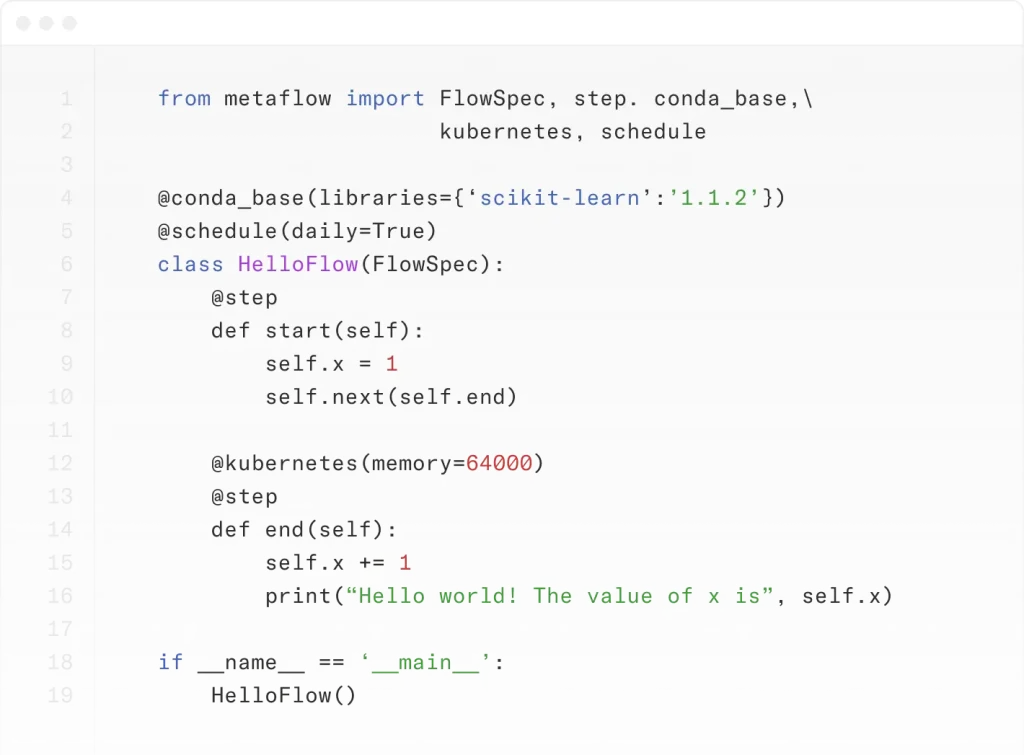

Metaflow

Metaflow is an open-source Python framework developed by Netflix to simplify building and managing data science, machine learning, and AI workflows. With built-in support for versioning, dependency management, and parallel execution, Metaflow streamlines workflow automation while integrating with cloud services like AWS for efficient scaling and deployment.

Pros

- User-friendly interface that abstracts complex infrastructure management, allowing data scientists to focus on model development.

- Seamless integration with cloud services like AWS facilitates effortless scaling and deployment.

Cons

- Originally built around AWS, so deployments on other cloud providers may require additional configuration.

G2 Reviews: 4.6 out of 5

Azure Data Factory

Azure Data Factory is a cloud-based data integration and orchestration platform from Microsoft. It allows teams to build, schedule, and manage data pipelines that move and transform data across cloud and on-premises systems.

With a visual interface, built-in connectors, and native integration with the Azure ecosystem, it also helps organizations handle ETL and ELT workloads at scale.

Pros

- Large library of connectors that integrate with databases, SaaS tools, and cloud storage services.

- Visual pipeline builder that makes it easier to design workflows and transformations without extensive coding.

- Strong integration with the Azure ecosystem, including services for analytics, storage, and machine learning.

Cons

- Costs can increase quickly for large data volumes or high-frequency pipelines.

- Monitoring and debugging complex pipelines can sometimes require multiple Azure tools.

G2 Reviews: 4.6 out of 5

Conclusion

After comparing the top 7 data orchestration tools for 2026 across factors like pricing, scalability, low/no-code capabilities, integrations, and customer feedback, DataChannel stands out as a strong option.

It brings together data aggregation, transformation, and activation in one place, making it easier for teams to manage their data workflows without juggling multiple tools. With integrations across 100+ platforms and strong API support, DataChannel gives organizations the flexibility to build pipelines that fit their specific data stack and use cases.

Try DataChannel Free for 14 days