Top 7 Data Orchestration Tools: 2024

Gone are the days when data teams had to manually schedule, monitor & maintain cron jobs for orchestrating pipelines. The automation era has ushered in efficiency, reliability, and scalability in managing data workflows. With the rise of first-generation tools like Airflow, the concept of Directed Acyclic Graphs (DAGs) emerged, streamlining batch scheduling. However, monitoring and error logging remained a challenge until the advent of second-generation orchestration tools. These Python-first platforms offered programmatic workflow management, addressing the growing complexity of data operations.

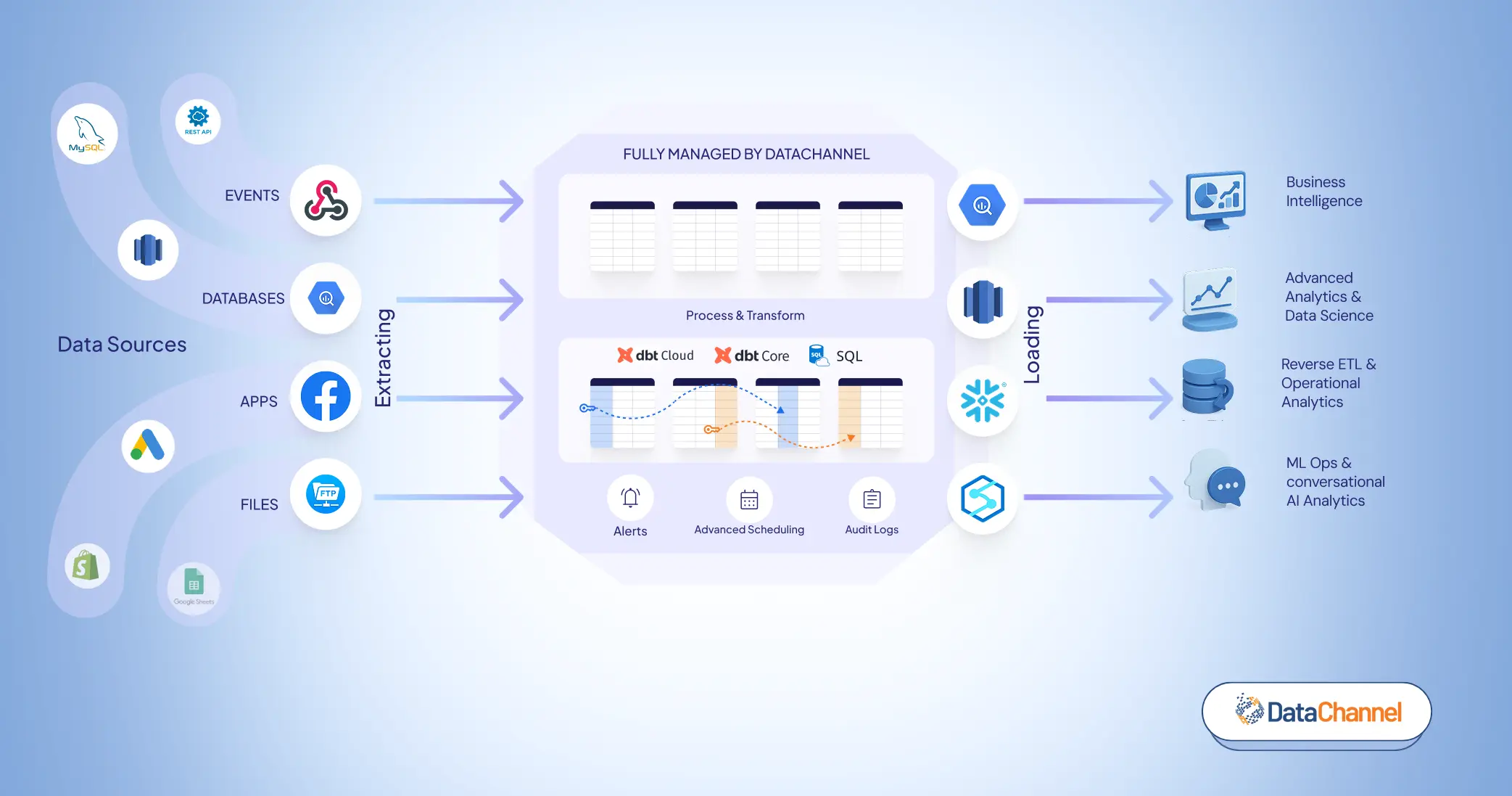

As the demand for seamless collaboration between tech and non-tech teams surged, the necessity for no-code solutions became apparent. Enter modern data orchestration tools like DataChannel. With their intuitive low-code framework, businesses of all sizes and backgrounds can leverage powerful functionalities effortlessly. Whether it's building DAGs, configuring pipelines, executing personalized marketing campaigns at scale, or transforming data through SQL or dbt, DataChannel empowers users to achieve their goals efficiently.

In this blog, we explore the top 7 data orchestration tools of 2024, categorized into first-generation, second-generation, and modern solutions.

Before getting to the tools, here are a few terms that are used heavily in data orchestration:

- A workflow is a process that contains 2 or more steps.

- Workflow orchestration is the coordination of tasks across infrastructure, systems, triggers, and teams.

- DAGs: “Directed” means that each edge has a defined direction, so each edge works in a unidirectional flow from one vertex to another. “Acyclic” means that there is no repetition (i.e., “cycles”) in the DAG, so that for any given vertex if you follow an edge that connects that vertex to another, there is no path in the DAG to get back to that initial vertex.

- Workspaces: Workspaces (within DataChannel) allow you to create completely isolated working areas that share only billing and pipeline templates and are otherwise independent of each other. Agencies, service providers, or multi-brand companies can use them to manage client or brand data and pipelines independently, and to move data from the same data source to multiple data warehouses or destinations in parallel.
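To make the DAG definition above concrete, here is a minimal sketch using Python's standard-library `graphlib` module. The task names are hypothetical and the example is not tied to any particular orchestration tool; it only shows that an acyclic graph yields a valid run order, while a graph with a cycle does not.

```python
from graphlib import TopologicalSorter, CycleError

# Each key is a task; the set lists the tasks it depends on (its upstream tasks).
# Task names are hypothetical.
pipeline = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
    "notify": {"load"},
}


def execution_order(graph):
    """Return one valid run order, or raise CycleError if the graph is not acyclic."""
    return list(TopologicalSorter(graph).static_order())


order = execution_order(pipeline)
print(order)

# Making "extract" depend on "notify" closes a loop, so no valid order exists.
cyclic = {**pipeline, "extract": {"notify"}}
try:
    execution_order(cyclic)
    has_cycle = False
except CycleError:
    has_cycle = True
print(has_cycle)
```

Because every edge points one way and no path leads back to its starting task, an orchestrator can always decide which task is safe to run next.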

First-Generation Orchestration Tools

1. Airflow

Apache Airflow™ (originally developed at Airbnb) is an open-source platform for developing, scheduling, and monitoring batch-oriented workflows. Airflow’s extensible Python framework enables you to build workflows connecting with virtually any technology. A web interface helps manage the state of your workflows. Airflow is deployable in many ways, varying from a single process on your laptop to a distributed setup to support even the biggest workflows. If you are a user who prefers coding over clicking, then Airflow might be the right tool for you. Being a first-generation data orchestration tool, Airflow is coding-first and is almost completely based on Python.

Pros-

- Pipelines are configured as Python code, allowing for dynamic pipeline generation.

- Airflow’s infrastructure uses operators which can be easily adjusted to your project or environment needs. You can find more about Airflow operators here.

- Best tool for users who love Python (as a coding language).

Cons-

- Very steep learning curve for users who are new to data (workflow) orchestration

- Not the right fit for streaming data (works best for batch-oriented workloads)

- DAGs need to be declared in a fixed pattern; otherwise the number of scheduling-related options multiplies.

G2 Rating: 4.4 out of 5
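The point about pipelines being configured as Python code, which allows dynamic pipeline generation, can be illustrated with a small sketch. This is plain Python and deliberately not Airflow's API: the source names, the `build_pipeline` helper, and the task naming scheme are all assumptions made for illustration. The idea is simply that a loop over a config list can generate the task graph instead of someone declaring each task by hand.

```python
# Hypothetical source names; in practice these might come from a config file.
SOURCES = ["orders", "customers", "payments"]


def build_pipeline(sources):
    """Generate an extract -> load task pair per source, all feeding one report task.

    Returns a mapping of task name -> set of upstream task names.
    """
    graph = {"build_report": set()}
    for name in sources:
        extract, load = f"extract_{name}", f"load_{name}"
        graph[extract] = set()           # extract tasks have no upstream
        graph[load] = {extract}          # each load waits for its extract
        graph["build_report"].add(load)  # the report waits for every load
    return graph


pipeline = build_pipeline(SOURCES)
for task, upstream in sorted(pipeline.items()):
    print(task, "<-", sorted(upstream))
```

Adding a fourth source then means adding one string to the list rather than writing new tasks and dependencies.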

2. Astronomer (Bonus Tool built with Airflow)

Astronomer’s flagship product, Astro, is built around Airflow to let enterprises use Airflow as a managed service. Astronomer (Astro) is the right tool for data teams looking to increase the availability of trusted data. Astro enables data engineers, data scientists, and data analysts to build, run, and observe pipelines-as-code. Astronomer handles the infrastructure-related concerns so that businesses can redirect their focus to data-driven decisions and insights. One of the mission statements from Astro also reflects this: “Cultivating the organic growth of the Airflow open source project and its community.”

Pros-

- Proficient customer support and feature-rich development processes.

- Some users on G2 have also claimed that Astronomer is the only feasible managed service available for Airflow currently.

- Docker-based development that enables users to test version upgrades and other updates on local machines before deploying them to production servers.

- Data lineage tracking is a big plus; it is very limited with Airflow alone.

Cons-

- Pricing can scale very rapidly, sometimes out of proportion with the increase in setup requirements.

- Monitoring data pipelines is a bit tricky, as the business logic for it is not predefined and users have to build custom logic for data and pipeline monitoring.

Pricing-

Pay-as-you-Go: from $0.35/hr per Deployment

G2 Rating: 4.6 out of 5

3. Luigi

Luigi (initially developed by Spotify for its own use) is the OG tool for complex data orchestration, developed even before Airflow. It helps developers build and monitor data pipelines and cuts down on manual effort. As a pioneering orchestration tool, Luigi belongs to an era before DAGs came into the picture, so users (particularly tech teams) take some time to familiarize themselves with the tool’s UI.

Features-

- Complex Infrastructure: Luigi is built to power complex workloads, including A/B test analysis, internal dashboards, recommendations, and external reports.

- User-friendly Web Interface: With Luigi, you can search, filter, and prioritize tasks seamlessly.

- Open-source: Because it’s open-source, Luigi is a great option for developers.

Pros-

- Ideal for heavy infrastructures as the tool is handy in complex situations

- You can integrate multiple tasks in a single pipeline as DAGs are not involved.

Cons-

- Scalability issues

While DAGs improved workflow representation, monitoring and error handling posed persistent challenges. The need for more robust solutions to track and manage pipeline executions became evident.

Second-Generation Tools

4. Dagster

Positioning itself as the next-gen tool for data orchestration, Dagster was launched in 2019 by Elementl. With Dagster, you can focus on running tasks, or you can identify the key assets you need to create using a declarative approach. Embrace CI/CD best practices from the get-go: build reusable components, spot data quality issues, and flag bugs early. One of the USPs of Dagster is the use of Software-Defined Assets (SDAs) in their framework. Working with SDAs is not mandatory, but they add a whole new dimension to your orchestration layer. SDAs allow you to:

• Manage complexity in your data environment.

• Write reusable, low-maintenance code.

• Gain greater control and insights across your pipelines and projects.
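The declarative, asset-based approach described above can be sketched in plain Python. This is not Dagster's API: the `asset` decorator, the `ASSETS` registry, and the `materialize` helper are hypothetical stand-ins written only to show the idea that you declare what each asset is and what it depends on, and the dependency order follows from those declarations.

```python
import inspect

ASSETS = {}  # asset name -> function that computes it


def asset(fn):
    """Register a function as an asset; its parameter names are its upstream assets."""
    ASSETS[fn.__name__] = fn
    return fn


@asset
def raw_orders():
    # Hypothetical source data.
    return [{"id": 1, "amount": 40}, {"id": 2, "amount": 60}]


@asset
def order_total(raw_orders):
    return sum(row["amount"] for row in raw_orders)


@asset
def order_summary(raw_orders, order_total):
    return {"orders": len(raw_orders), "total": order_total}


def materialize(name, cache=None):
    """Compute an asset after computing everything it depends on, each only once."""
    cache = {} if cache is None else cache
    if name not in cache:
        deps = inspect.signature(ASSETS[name]).parameters
        cache[name] = ASSETS[name](**{dep: materialize(dep, cache) for dep in deps})
    return cache[name]


summary = materialize("order_summary")
print(summary)
```

Nothing in the sketch states a run order explicitly; asking for `order_summary` is enough for the upstream assets to be computed first.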

Pros-

- Reusable code saves time otherwise spent on manual repetition

- Gentler learning curve when compared to first-gen tools

- SDAs give more control over pipeline scheduling, running, and monitoring.

- Embedded functionalities and integrations related to ETL, dbt & Airflow

Cons-

- Pricing falls more on the higher side (not suitable for SMEs)

Pricing-

Starter Plan: 30,000 credits/ month, $0.03 per additional credit

5. Prefect

A second-generation orchestration tool, Prefect offers both a CLI and a GUI, and is built for seasoned developers as well as those starting out on their journey with orchestration. With Prefect, you can orchestrate your code and get full visibility into your workflows without the constraints of boilerplate code or rigid DAG structures. The platform is built on pure Python, allowing you to write code your way and deploy it seamlessly to production. Prefect’s control panel also offers scheduling, automatic retries, and instant alerting, ensuring you always have a clear view of your pipelines and workflows.
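To show what automatic retries and alerting mean in practice, here is a minimal plain-Python sketch. It is not Prefect's API: the `with_retries` decorator, its parameters, and the `flaky_extract` task are hypothetical names used only to illustrate the behaviour of retrying a failing task a fixed number of times and raising an alert when it finally gives up.

```python
import time
from functools import wraps


def with_retries(retries=3, delay_seconds=0.0, on_failure=print):
    """Retry a task up to `retries` extra times, then alert and re-raise."""
    def decorator(fn):
        @wraps(fn)
        def wrapper(*args, **kwargs):
            for attempt in range(retries + 1):
                try:
                    return fn(*args, **kwargs)
                except Exception as exc:
                    if attempt == retries:
                        # Out of attempts: send the alert and surface the error.
                        on_failure(f"{fn.__name__} failed after {attempt + 1} attempts: {exc}")
                        raise
                    time.sleep(delay_seconds)
        return wrapper
    return decorator


calls = {"count": 0}


@with_retries(retries=2)
def flaky_extract():
    """Hypothetical task that fails twice before succeeding."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("source unavailable")
    return "ok"


result = flaky_extract()
print(result, calls["count"])
```

In a real orchestrator the `on_failure` hook would typically post to email or chat rather than print, and the delay between attempts would be non-zero.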

Pros-

- Minimal setup requirements, scalability, versatile time-based or event-based scheduling, and observability features.

Cons-

- Documentation is not extensive or detailed

- Tool updates and new releases frequently break production pipelines and workflows.

Pricing: Pro Plan: $405/Month

Modern Data Orchestration Tools

6. DataChannel

DataChannel marks a paradigm shift in the data orchestration landscape by bringing a much-needed no-code, drag-and-drop interface into the picture. It is an all-encompassing modern data orchestration tool that covers everything from data extraction and transformation to activation.

First- and second-generation tools come with inherent integration-related limitations, and DataChannel overcomes them easily. DataChannel offers 100+ integrations for both ETL & Reverse ETL functionalities.

Its highly scalable & reliable infrastructure enables teams of all sizes to work their way around with unique data needs easily. Moreover, its no-code interface allows business professionals of all backgrounds to make the most out of DataChannel.

Although Data Orchestration is a new feature from DataChannel, it is already quite advanced, with features such as:

- Building unbreakable workflows using DAGs

- Instant logs & alerts for successful completion or failure of a workflow

- Integration with dbt Core & dbt Cloud to enable smooth version control & eliminate repetition in transformations

- Compliance with GDPR & HIPAA

- Detailed documentation for every integration and new feature

- Best-in-class customer support

Other Useful Features:

API Access

DataChannel offers its own API so that developers can programmatically move their data from source to target. A tool that provides access to a public API while offering the same features, and without compromising on privacy, is the tool that your business will need. The ability to programmatically control the power of DataChannel is what sets it apart from the competition. Learn more about DataChannel’s Management API here.

ETL & Reverse ETL

DataChannel combines the power of both ETL & Reverse ETL in one tool. Imagine getting all the integrations you need with different source & target systems to complete your orchestration workflows. If you want data-driven efficiency and want to maximize the return on investment in your data teams, you will need to bring the missing piece of Reverse ETL into your data stack. Your competitors are most probably already embarking on this path, and unless you do so too, you will miss out on the growth it can bring. You get both these functionalities in one place without having to pay for anything extra. The best part: businesses of all sizes can leverage DataChannel to maximize their operational efficiency.

Managed Data Warehouse

Managing a data warehouse requires both expensive IT resources and trained manpower for its administration and troubleshooting. In case you do not yet have the in-house expertise or do not want to divert your team's time and energy towards this task, you can rely on us to manage your warehouse for you. You can select from one of the two cloud warehouse options available when you choose us to manage your data for you.

Pros-

- Easy-to-use platform, and connectors will work like magic

- Helps manage our warehouse, when we have little data infrastructure expertise

- Custom connectors built on priority

- Works well for businesses of any size owing to its flexible & transparent pricing

- Data Orchestration is offered at no extra cost

Cons-

- Because it is not a coding-first tool, it might not be the best choice for developers looking for a Python-first experience. However, code enthusiasts can still enjoy the fine-grained controls offered in the platform.

Pricing: Professional Plan: $250/ Month

G2 Rating: 4.8 out of 5

7. Shipyard

Shipyard is a tool known for offering seamless data sharing, and provides on-demand triggers, automatic scheduling, and built-in notifications with no need for code configuration or similar adjustments. Shipyard further comes packed with streamlined data workflows with detailed history logging and over 50 low-code integrations. It also offers data orchestration with third-party tools like Lambda, Zapier, and dbt Cloud, ensuring flexibility and efficiency in managing your data ecosystem.

Pros-

- Shipyard can solve complex data orchestration in less than 5 minutes

- Share repeated or adjusted solutions with other members of the group in real-time

Cons-

- May require some time to understand the way it works

Pricing: Team Plan: $300 + runtime /month

G2 Rating: 4.6 out of 5

Evaluation Criteria for the right data orchestration tool

Scalability: When choosing a data orchestration platform, it is imperative that you find a tool that is scalable. In simple terms, you need a platform that scales alongside your growing data needs without throwing up too many infrastructure-related issues, and that stays just as easy to use as deployments and usage increase.

Pricing: Transparent pricing is a must. The right data orchestration tool gives you clear pricing based on usage and integrations. For SMEs, even a small change in row-based or consumption-based pricing can lead to a big change in the total cost. Committing to a data orchestration tool is a big leap, and a tool that covers all your orchestration needs related to ETL, Reverse ETL, and data transformation without affecting the price too much is the right tool for you.

Integrations Offered: Businesses need a tool that offers robust integrations with data sources as well as destinations. They also need support for custom connectors for their unique business needs. A vendor whose team is prompt in building the right custom integration for its clients is the best one to go ahead with.

Documentation: You need documentation that works well for both tech and non-tech teams. From setting up an integration from scratch to migrating from one data warehouse to another, extensive documentation always comes in handy. Well-laid-out documentation that gives you in-depth details on all the source-system and destination-related parameters is what will best suit your business.

Code/No Code: In this blog we covered both code-first and no-code data orchestration platforms in detail. Your team's requirements will decide whether you need a code-first or a code-free tool for your orchestration needs.

Troubleshooting & Error Logging: Error logging and troubleshooting are another important parameter that will help you in both the short and the long run. Getting notifications for successful completion of your orchestration runs is important, but it is equally important to understand why an orchestration failed or, more specifically, why a workflow within an orchestration didn’t work out as planned.

Customer Support & Reviews: Last but never least is a prompt customer support team. A support team that is willing to answer or resolve your queries without much nudging is a team that will work best for you. If the support team is not available to answer your questions when you are starting out with a new tool, the learning curve will pose a big challenge. Customer reviews matter too, and platforms such as G2 help you find reviews that will aid your hunt for the right data orchestration tool.

Data Orchestration with DataChannel

Based on the evaluation criteria mentioned above, DataChannel stands out as the ultimate data orchestration tool, and here's why. In terms of functionalities and scalability, DataChannel accelerates your results by efficiently aggregating, transforming, and activating data directly on top of your single source of truth.

With 100+ integrations and robust API support, DataChannel also gives you the flexibility to build custom data pipelines tailored to your needs. Whether you prefer a low-code or a no-code approach, DataChannel empowers you to effortlessly create complex end-to-end ELT & Reverse ETL pipelines in no time.

Try DataChannel Free for 14 days